Johnny Carson’s Life-Changing Lesson: How a 16-Year-Old Girl Revolutionized The Tonight Show

Johnny Carson asked a 16-year-old blind girl in his audience what she thought of the show. Her answer made him forget his script, stop the taping, and completely change how The Tonight Show was produced for the next decade. It was October 23rd, 1982. The Tonight Show was taping its Friday night episode at NBC’s Burbank Studios.

Johnny Carson had just finished his monologue to thunderous applause. As he settled behind his desk to begin the audience Q&A segment, his eyes swept across the crowd. That’s when he noticed something unusual in the fourth row. A beautiful golden retriever wearing the distinctive harness of a guide dog sat perfectly still at the feet of a teenage girl.

The girl wore dark sunglasses despite the indoor setting. She sat between her parents, her hands resting on the dog’s head, a peaceful smile on her face. Johnny had seen guide dogs before, but rarely at his show. Something about this girl’s serene expression amid the chaos of a television taping intrigued him. “I see we have a very special guest in the audience tonight,” Johnny said, pointing toward the fourth row.

“Young lady with the beautiful guide dog, what’s your name?” The girl turned her head toward the sound of Johnny’s voice, her smile growing wider. “My name is Jennifer Walsh, Mr. Carson. And this is Harper.” “Harper’s a handsome dog,” Johnny said warmly. “How long have you two been together?” “Three years,” Jennifer replied, her voice clear and confident. “Since I was 13. He’s my best friend.”

The audience gave a warm “aww” at this, and Johnny smiled, but what he did next would set in motion a conversation he’d remember for the rest of his life. “Jennifer, I have to ask: you’ve been in our audience for about 45 minutes now. What do you think of the show so far?” It was meant as a light-hearted question, the kind Johnny asked all the time. He expected something simple, like “It’s great” or “I love it.”

What he didn’t expect was the answer that would stop him mid-performance. Jennifer tilted her head thoughtfully, her hand still resting on Harper’s head. When she spoke, her voice was gentle, but it carried a weight that seemed to make the entire studio hold its breath. “Mr. Carson, I think your show is wonderful. I really do. I listen to the Tonight Show every single night before bed. It’s my favorite program on television. But if I’m being completely honest, I have to tell you something. I don’t actually know what your show looks like. I don’t know what you look like. I don’t know what your guests look like or what they’re wearing or what’s happening on stage when everyone laughs, but nobody says anything. Half the time I’m laughing because everyone else is laughing, but I don’t actually know what’s funny.”

The studio went completely silent. Johnny’s prepared follow-up question died on his lips. He sat at his desk, staring at this teenage girl who had just articulated something that had never occurred to him in 20 years of hosting.

Jennifer continued, not in an accusatory way, but with a simple matter-of-fact honesty that made her words even more powerful. “Like right now for instance, based on the silence, I’m guessing you’re doing something with your face. Maybe that eyebrow thing you do that everyone always talks about. But I don’t know. I just know it got quiet.”

Johnny was indeed doing his signature raised eyebrow expression, a gesture so famous that every comedian in America had imitated it at some point, but he’d never considered that it meant nothing to someone who couldn’t see it. “You’re right,” Johnny said quietly into his microphone, his voice uncharacteristically subdued. “I am doing the eyebrow thing.”

“See, now I know,” Jennifer said with a gentle laugh, “but usually I don’t. And don’t get me wrong, I love your show. Your jokes are brilliant. Your interviews are fascinating, and your voice is so warm and welcoming. But there’s this whole other show happening visually that I’m completely missing.”

“The physical comedy, the gestures, the faces people make. My parents try to describe things to me, but you can’t describe everything. Some nights I feel like I’m listening to a radio show that everyone else is watching as a TV show.” Johnny set down his note cards. His producer was probably panicking in the control booth, wondering why Johnny had abandoned the planned segment. But Johnny didn’t care.

For the first time in his career, he was genuinely shaken by something an audience member had said. “Jennifer,” Johnny said, leaning forward on his desk. “I’ve been doing the show for 20 years. I’ve interviewed thousands of people. I’ve performed for millions of viewers, and in all that time, I never once stopped to think about what my show is like for someone who can’t see it.”

“Most people don’t,” Jennifer said kindly. “It’s not your fault. People who can see don’t usually think about people who can’t. It’s just how the world works.”

“But it shouldn’t be,” Johnny said. There was something in his voice, a mix of shame and determination, that made the audience shift uncomfortably in their seats.

“You pay the same money for a ticket that everyone else does. You watch, or rather listen to, the same show everyone else watches. Why should you get half the experience?” Jennifer shrugged with a wisdom beyond her 16 years. “Because that’s just how TV is made, Mr. Carson. It’s a visual medium. It’s not designed for people like me.” Johnny stood up from his desk and walked to the edge of the stage, looking down at Jennifer in the fourth row.

Ed McMahon watched from his seat, having no idea what Johnny was about to do. Neither did anyone else. “What would help?” Johnny asked. “What could we do differently that would make this show more accessible to you?” Jennifer looked surprised, as if she’d never expected anyone to ask her that question, let alone Johnny Carson on national television.

Her mother, seated beside her, put a hand on her daughter’s shoulder, equally shocked. “Well,” Jennifer said slowly, “it would help if someone described what’s happening. Not everything; that would be annoying and interrupt the flow. Just the visual stuff that’s important. Like when you pointed at me earlier, someone could have said, ‘Johnny is pointing at you,’ so I’d know you were talking to me instead of someone near me. Or when you do physical comedy, you could just narrate what you’re doing, even briefly: ‘I’m making a face’ or ‘I’m doing this gesture’ or whatever. It doesn’t have to be much, just enough so I’m not in the dark, literally.” She laughed at her own joke and the audience laughed with her, but it was a different kind of laughter than the usual Tonight Show laughter.

It was the sound of people having their eyes opened to something they’d never considered. Johnny nodded slowly, processing everything Jennifer had said. Then he looked directly at the camera, addressing not just the studio audience, but the millions of viewers at home. “Ladies and gentlemen,” he said, “I’ve just been educated by a 16-year-old girl.”

“Jennifer is absolutely right. We’ve been making this show for 20 years without considering that there might be people watching, or trying to watch, who can’t see what we’re doing. That ends tonight.” He turned back to Jennifer. “Would you do me a favor? Would you stay after the taping and talk to me and my producers about what we could do better? Because I don’t want you to ever have to guess what’s happening on my show again.”

Jennifer’s face lit up with a smile that seemed to brighten the entire studio. “I’d be honored, Mr. Carson.” The audience erupted in applause, and Johnny returned to his desk, but the rest of the show had a different energy. Johnny found himself naturally describing his physical actions: “I’m looking at Ed now.” “I’m shaking my head.” “I’m doing an exaggerated shrug.” He was incorporating Jennifer’s feedback in real time.

After the taping, Johnny did something unprecedented. Instead of going straight to his dressing room, he brought Jennifer, her parents, and his production team into a conference room for an hour-long discussion about accessibility.

Jennifer explained how she experienced television. She described the frustration of loving shows but missing their visual elements. She talked about descriptive audio tracks in movies and suggested television could do something similar. Johnny listened to every word, taking notes and asking questions. His producer, Fred de Cordova, initially resistant, gradually came around as he listened to Jennifer’s clear explanations.

“What you’re describing,” Fred said eventually, “is basically adding a narrator to our show for visual information.” “Not a narrator exactly,” Jennifer clarified, “more like occasional descriptions, just filling in the gaps. It wouldn’t have to be constant, just when something visual happens that’s important to understanding what’s going on.”

By the end of the meeting, Johnny had made a decision that would change television broadcasting across America. Starting the following week, The Tonight Show began incorporating descriptive elements. Johnny would occasionally narrate his own physical comedy or Ed McMahon would briefly describe what was happening on stage.

Gradually, it became more sophisticated. The show worked with the American Council of the Blind to develop best practices. They trained staff on when and how to describe visual elements. They experimented with different approaches, always soliciting feedback from blind viewers. Within 6 months, The Tonight Show had developed a Secondary Audio Program (SAP) track that provided audio descriptions for blind viewers.

A trained describer would narrate the visual elements in real time, filling in what Jennifer had called the gaps. But Johnny didn’t stop there. He used his influence and national platform to advocate for broader television accessibility. He testified before Congress about the importance of descriptive programming. He lobbied NBC executives to implement accessibility features across all their programming.

The moment a 16-year-old girl changed television forever.


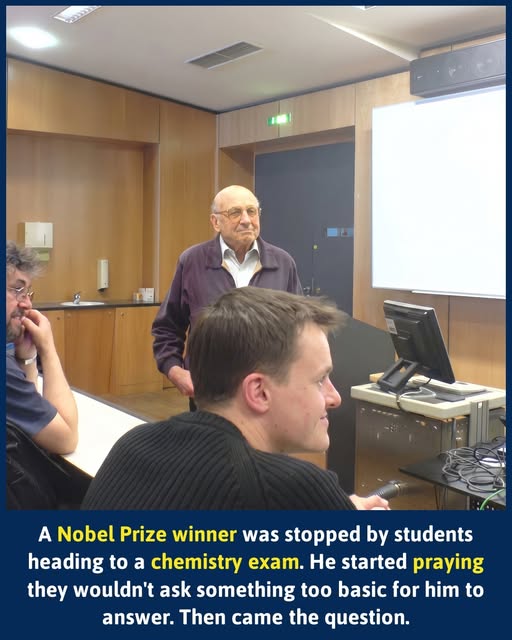

Walter Kohn

The morning after Walter Kohn won the Nobel Prize, he did something remarkably ordinary. He walked across campus.

It was 1998, and the quiet streets of Santa Barbara were buzzing. Kohn had just been awarded the Nobel Prize in Chemistry for his groundbreaking density-functional theory — work that had reshaped the way scientists understood the quantum behaviour of electrons. His face was in the student newspaper. People knew who he was.

Two students spotted him walking in the opposite direction. One of them turned around, jogged back, and asked point-blank: “Are you the guy who won the Nobel Prize?” Kohn said yes. Both students wrapped him in a spontaneous, warm hug — then kept walking.

But then one of them came back.

They were on their way to a chemistry exam, she explained. Could they ask him just one quick question? Kohn said yes — and then, by his own admission, immediately started praying.

Because here was the brutal reality of his situation. Walter Kohn was, at his core, a theoretical physicist. Yes, he had won the Nobel Prize in Chemistry, but his work lived in the rarefied air of advanced quantum mechanics — the kind of science that operates at the bleeding edge where chemistry and physics blur into one. Ask him about electron density approximations? Brilliant. Ask him what happens in a first-year general chemistry lab? Suddenly the Nobel laureate is sweating.

The more basic the question, he knew, the more likely he was to have absolutely no idea how to answer it.

So he stood there on that sun-drenched California footpath, a man whose name would now be spoken alongside Curie, Bohr, and Pauling, silently begging the universe to throw him a lifeline.

And then she asked the question.

Kohn listened carefully. And something clicked. The question wasn’t really a chemistry question at all — it was a physics question. Right in his wheelhouse. The exact territory he had spent decades mastering.

He gave them, by his own cheerful assessment, a brilliant answer. The students were genuinely impressed. They headed off to their exam. And Walter Kohn walked on across campus, relieved, amused, and perhaps reminded that even a Nobel Prize comes with no guarantee you know what’s on somebody else’s test.

Image Credit to Jtk33 (Wikimedia Commons) (Restored & Colorized)

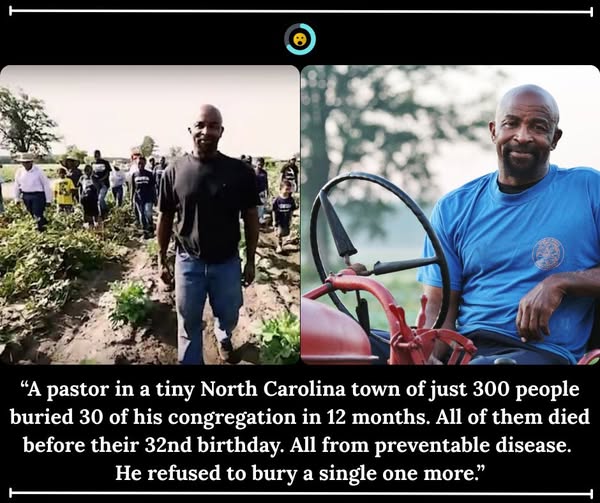

Richard Joyner

The town of Conetoe, North Carolina, barely exists on a map. Population: 300. Mostly poor.

The nearest grocery store sits 10 miles away. That’s what a food desert looks like – farmland stretching in every direction, and not a single fresh vegetable within easy reach.

1986. Conetoe, North Carolina.

Richard Joyner already knows this land. He grew up here – one of 13 children in a sharecropping family – and spent every summer bent over crops under the eastern North Carolina sun. The moment he turned 18, he joined the Army and left. He swore he would never come back.

But he came back.

He came back to lead Conetoe Chapel Missionary Baptist Church. And in a town this small, serving a congregation means standing at the graveside more than anyone should ever have to.

The deaths come early and often. Diabetes. High blood pressure. Obesity. Edgecombe County ranks 97th out of 100 North Carolina counties in health and economic well-being. These diseases don’t wait for old age here.

2005. One year. 30 funerals.

In a single 12-month stretch, Joyner buries 30 members of his congregation. Not elderly men and women at the end of long lives. These are people under the age of 32. Every single death is preventable.

“Diabetes, high blood pressure – when we first got started, we counted 30 funerals in one year,” he says. “I couldn’t ignore it anymore. I was spending more time at funerals than anywhere else.”

Here’s what makes it worse: the town is completely surrounded by farmland. Food grows in every direction. But none of it reaches the 300 people who live here. The nearest grocery is 10 miles down the road, most families have no reliable way to get there, and what’s cheap at the corner store is almost never fresh. So people eat what they can afford. And they keep dying young.

Joyner looks out at his congregation every Sunday and sees what is coming. People he loves. People 100 pounds overweight, moving slower each week, their bodies giving up piece by piece. He knows exactly what happens next if nothing changes.

“It just started to feel unconscionable,” he later says, “that you would see someone 100 pounds overweight on Sunday and not say anything about it.”

He decides to stop being quiet. And then he decides to do something.

2007. An empty church lawn. A completely different idea.

Joyner walks outside and starts to dig. He turns the grass around the church into a garden – rows of vegetables, herbs, and fruit. Then he makes a decision nobody sees coming: he goes looking for the kids.

Not the easy ones. He goes after the ones failing in school. The ones drifting toward trouble. The ones with nowhere safe to be after 3 p.m. He puts a shovel in their hands. He teaches them how soil works, how seeds grow, how a living thing needs tending every single day. He makes them responsible for something alive. Something that needs them.

One boy arrives – restless, struggling with attention, full of energy with nowhere to go. Joyner looks at him and says, “Get out in the field and have fun.”

The boy pauses. “Can I take my shoes off?”

Joyner grins. “Yeah, pull your shoes off.”

The boy sprints barefoot through the rows, crouching down to press his fingers into the dirt, tasting raw vegetables for the first time in his life. Over the months that follow, his teachers watch something change. His focus sharpens. His grades climb. His whole way of moving through the world shifts.

This is what the garden is actually growing.

Today. An oasis where there used to be only grief.

The Conetoe Family Life Center now manages more than 20 plots of land – including a 25-acre site. More than 80 young people help plan, plant, and harvest. They manage beehives, produce honey, and pollinate the crops themselves. Together they grow tens of thousands of pounds of fresh food every year – all of it given away, free, to families who need it most. Roughly 1,500 people are fed every single week.

In 2015, CNN named Richard Joyner one of its Top 10 Heroes of the year. The center has expanded to 21 locations across 4 counties – and it has united Baptists, Muslims, and Unitarians, all working side by side in the same dirt.

“We can grow more medicine through the plants than we can buy,” Joyner says. “And there are no side effects.”

He took the land his family was once forced to work as sharecroppers – land soaked in generations of injustice – and turned it into something new entirely. A place where children learn their own power. Where a community decides it will no longer eat badly and die young.

The funerals didn’t stop. But the preventable ones? That’s a very different story now.

Share this with someone who needs to be reminded that one person – with a shovel, a church lawn, and a heart that refuses to quit – can change the course of an entire community.

Motivation, Discipline and Willpower

I already have a blog post on how to work out your basic purpose in life, which I heartily recommend you read soon.

https://www.tomgrimshaw.com/tomsblog/?p=37862

I am told it was Confucius who said, “Do something you love to do and you will never work a day in your life.”

My experience is that when you are working on your basic purpose, it is almost like you are being paid to have fun.

I often say to people, “When you are on your Basic Purpose, progress is more like a hot knife through butter than walking through molasses in the middle of winter.”

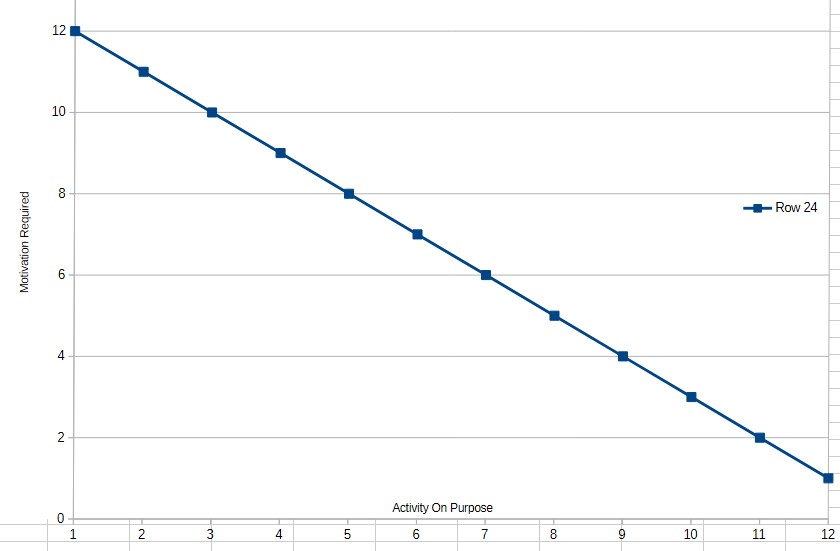

In my experience there is a particularly strong inverse relationship between purpose and the amount of motivation required.

The more aligned an activity is with your:

basic purpose

identity

meaning

values

curiosity

mastery

contribution

or intrinsic enjoyment

the less external motivational force is required.

This could be represented by a graph with ‘on Purpose’ on the horizontal axis and ‘Necessity to Bootstrap Your Motivation’ on the vertical axis. The line would start at the top left and run in a straight line to the bottom right, indicating that the more ‘on purpose’ people’s activities are, the less they need to try to motivate themselves.

The more people are meaningfully engaged, the less exertion feels like “effort” or work and the more it feels like flow or fun.

You could even visualise it as something like:

Top Left:

“Maximum motivational force required”

drudgery

coercion

meaningless labour

externally imposed goals

Bottom Right:

“Self-sustaining engagement”

vocation

calling

obsession

creative flow

mission

Purpose does not eliminate difficulty; it changes your relationship to difficulty. Even purpose-driven work still contains:

administration

repetition

maintenance

frustration

uncertainty

and sacrifice

A parent caring for a child may be exhausted but still deeply willing.

An entrepreneur building a mission-driven company may work extremely hard without perceiving themself as oppressed by the work.

That distinction matters.

One useful framing is that motivation is multi-faceted. Different activities are powered by different energy sources.

| Source | Stability | Example |
|---|---|---|
| Fear | Short-term | Avoid punishment |
| Reward | Moderate | Earn treat/money/status |
| Obligation | Moderate | Duty/responsibility |
| Identity | Strong | “I am this kind of person” |
| Purpose | Very strong | Meaningful mission |
| Love/Curiosity | Extremely strong | Intrinsic engagement |

The stronger and more stable the source, the less conscious willpower is required.

But we are not all blessed enough to be working on our basic purpose right now, so we need ways to feel more motivated towards the task at hand. One way is to focus on the product of the activity rather than the task itself. Another is to offer yourself a reward for completing the task. A third, discussed in the blog post ‘Systematizing Willpower’, is to construct a framework that makes it easier to do the task than not to do it.

Motivation vs Discipline vs Willpower

These are often confused.

Motivation is the emotional desire to act.

It is useful but fluctuates heavily.

Discipline is conditioned consistency of behaviour.

Doing things whether emotionally inclined or not.

Willpower is the short-term override capacity.

The ability to resist impulses or force action temporarily.

Willpower is best viewed as:

finite

exhaustible

and unreliable if overused

Which is why a systems approach is so important.

The Problem With “Try Harder”

Many motivational systems fail because they rely on continual conscious exertion, but humans are not designed for perpetual self-coercion.

A better strategy is:

reduce friction toward desired behaviours

increase friction toward undesired behaviours

automate good defaults

attach behaviours to identity and meaning

In other words, it is vastly more sustainable to construct environments where the right behaviour is easier than the wrong behaviour.

Decision

Just a quick word here on the subject of decision. I have sometimes pulled myself up after noticing that I had not done something for some time, and realised I had been aware of the need to do a certain task without actually making the decision to do it. These things sit in your mind as unfinished tasks. They occupy your head space without paying rent! Just like a bad tenant, they need to be moved on!

The Four Major Levers of Sustainable Motivation

1. Purpose and Meaning

These are the strongest long-term drivers.

Questions that increase motivation:

Why does this matter?

Who benefits?

What larger goal does this serve?

What future does this create?

Humans can tolerate enormous effort if the effort feels meaningful. Without meaning, even light work becomes draining.

2. Visualise the Outcome

Focusing on the product rather than the task is extremely powerful. A bricklayer may think “I am stacking bricks” or “I am building a cathedral.” The physical actions may be identical but the mental experience is radically different.

Visualization techniques work partly because they focus on future emotional gratification rather than present effort. They minimize present discomfort by concentrating on future reward.

Examples:

athlete visualising victory

dieter visualising health

entrepreneur visualising impact

student visualising competence

The mind tolerates present sacrifice more readily when future value feels vivid.

3. Reward Systems

Immediate rewards help bridge the gap between:

present effort

and delayed benefit

This is because humans are strongly biased toward immediate gratification.

Useful rewards:

breaks

favourite drink

music

recreation

social reward

tracking streaks

visible progress markers

Rewards work best when they are:

modest

immediate

and linked clearly to completion

4. Environmental and System Design

This is probably the most underrated factor. Behaviour is highly situational. People often overestimate character and underestimate environment.

Examples:

Factors That Increase Desired Behaviour

Prepare workspace in advance

Put gym clothes beside bed

Keep healthy food visible

Use checklists

Schedule tasks into calendar

Batch similar tasks

Reduce startup friction

Factors That Decrease Undesired Behaviour

Remove distracting apps

Use website blockers

Keep phone in another room

Disable notifications

Add accountability

Increase effort required for bad habits

The goal is to make good behaviour:

obvious

easy

automatic

and repeatable

Identity-Based Motivation

People defend identity remarkably strongly. One of the strongest modern insights is that behaviour tends to stabilize around identity.

Instead of “I want to write,” the stronger frame is “I am a writer.”

Instead of “I should exercise,” the better alternative is “I am someone who trains.”

This shifts behaviour from externally forced to internally coherent.

Momentum and Activation Energy

Starting is often harder than continuing.

Many tasks have high:

emotional resistance

uncertainty

cognitive startup cost

Once a task is begun, resistance falls sharply, so effective systems reduce “activation energy.”

Examples:

Commit to 5 minutes only

Open the document

Put on shoes

Write one sentence

Do one push-up

Action frequently generates motivation more reliably than waiting for motivation to generate action.

The Motivation Trap

Many people wait to feel motivated before acting. They naively think: Motivation > Action > Progress

But in practice the sequence is often: Action > Progress > Motivation

Progress itself is motivating.

Which is why:

checklists

visible tracking

completion markers

streaks

and milestones

are effective progress boosters.

The Importance of Friction

A surprisingly useful concept is that tiny frictions dominate behaviour.

Examples:

one extra click

needing a password

shoes not nearby

unclear next step

cluttered workspace

Similarly:

tiny conveniences encourage action.

The practical implication:

small environmental modifications can outperform large amounts of willpower.

Emotional Resistance

Often “lack of motivation” is not laziness. It may actually be:

fear of failure

fear of judgement

overwhelm

perfectionism

ambiguity

lack of clarity

or lack of emotional reward

Sometimes the solution is not “motivate harder” but “reduce psychological threat.”

Breaking tasks into smaller pieces is powerful partly because it reduces perceived danger and uncertainty.

Rest and Recovery

Motivation collapses without recovery. Sleep deprivation, chronic stress and cognitive overload reduce:

impulse control,

emotional stability,

persistence,

focus,

and optimism.

Many people try to solve exhaustion with discipline. That usually fails. Biology eventually overrides ideology.

Social Reinforcement

Humans are deeply social.

Motivation increases when behaviour is:

visible

shared

encouraged

or culturally reinforced

Examples:

workout partners

writing groups

public commitments

mentoring

accountability systems

Isolation weakens persistence for many people.

Perhaps the Most Important Principle

The ultimate goal is not maximum self-coercion; it is alignment.

Alignment between:

basic purpose

values

identity

goals

environment

incentives

habits

and behaviour

When alignment is high:

less willpower is needed

less internal conflict exists

and effort becomes far more sustainable

At the extreme end, people sometimes experience:

vocation

calling

mission

or devotion

At that point motivation becomes almost self-fueling.

Action recommendations:

0. Make a list of your incomplete tasks or projects.

For any area where you experience anything other than full motivation or drive:

1. Work out the end product of the activity.

2. Specify how it aligns with your goals and purposes.

3. Decide on a reward for completing that task.

4. Identify actual or potential friction points and implement a convenience that eliminates the friction point.

5. Make the decision you are going to achieve the end-product of the activity.

6. Set a target date and time for the completion of it.

7. Set a starting time for it, even if it is only for 5–15 minutes of allocated time.

Lastly, recognise that there is a part of the mind set up to help you fail and that it is a never-ending source of resistance to your success. Do not be surprised or dismayed when you experience thoughts counter to your intentions. Some of the above recommendations are ‘workarounds’ to help you overcome the mental resistance. The optimum solution is to remove that part of the mind, the source of the counter-intention. Can you imagine how freeing that would be?

Time Management

If you want to improve your time management, the very first thing to do is to ‘come off automatic’, to start to make conscious, deliberate decisions about where you invest your daily minutes rather than just ‘going with the flow’.

To help do this I recommend you take stock of where you are investing your time at present. This is one of the highest-leverage things a person can do. Most people feel short of time but have never actually measured where it goes.

There is an old management principle often attributed to Peter Drucker, “What gets measured gets managed.”

Time tracking is useful because it often reveals:

hidden time drains

context-switching costs

optimistic self-estimates

emotional avoidance patterns

and activities that give very poor return for the time invested

A practical system usually works best when it combines:

1. Measurement

2. Classification

3. Review

4. Adjustment

Here are some tools and methods, from simplest to most sophisticated.

1. The Notebook Method (Surprisingly Effective)

Carry a notebook or use a notes app.

Every 15–30 minutes, write:

time

activity

optional energy/mood score

Example:

7:00–7:30 Breakfast + news

7:30–8:10 Emails

8:10–9:40 Deep work: proposal

9:40–10:15 YouTube drift

This works because:

it is frictionless

creates awareness

and immediately reduces unconscious behaviour

A variation is to use categories:

Work

Admin

Learning

Family

Entertainment

Exercise

Social media

Travel

Sleep
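If you keep the log in a notes app, a few lines of code can total it for you. The Python below is a minimal sketch, not a recommended tool: it assumes each entry follows the `H:MM–H:MM Activity` pattern of the example above and that no entry crosses midnight. The function names `minutes_between` and `summarise_log` are hypothetical.

```python
from datetime import datetime


def minutes_between(start: str, end: str) -> int:
    """Return the minutes between two 'H:MM' times on the same day."""
    fmt = "%H:%M"
    delta = datetime.strptime(end, fmt) - datetime.strptime(start, fmt)
    return int(delta.total_seconds() // 60)


def summarise_log(lines: list[str]) -> dict[str, int]:
    """Total the minutes spent on each activity in a notebook-style log.

    Each line is assumed to look like '7:30–8:10 Emails', with an en dash
    (or a plain hyphen) between the start and end times.
    """
    totals: dict[str, int] = {}
    for line in lines:
        times, activity = line.split(" ", 1)          # time range, then description
        start, end = times.replace("–", "-").split("-")
        totals[activity] = totals.get(activity, 0) + minutes_between(start, end)
    return totals


log = [
    "7:00–7:30 Breakfast + news",
    "7:30–8:10 Emails",
    "8:10–9:40 Deep work: proposal",
    "9:40–10:15 YouTube drift",
]

for activity, minutes in summarise_log(log).items():
    print(f"{activity}: {minutes} min")
```

Run over the four example entries, this shows the 90-minute deep-work block next to 35 minutes of YouTube drift, which is exactly the kind of contrast the notebook is meant to surface.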

2. Spreadsheet Tracking

Good for analytical personalities.

Columns:

| Start | End | Activity | Category | Energy | Value |
|---|---|---|---|---|---|

Additional useful ratings:

Importance (1–5)

Enjoyment (1–5)

Return on Time (low/medium/high)

After a week, patterns emerge quickly.

Many people discover:

2–4 hours/day vanish into reactive behaviour

interruptions are worse than expected

high-value work occupies surprisingly little time

3. Pomodoro + Logging

The Pomodoro Technique combines:

focused work blocks

timed breaks

and implicit tracking

Typical structure:

25 minutes focused work

5-minute break

after 4 cycles take a longer break

Each completed session is logged.

Advantages:

improves focus

creates measurable output

helps estimate real task duration
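If you prefer to plan the blocks in advance, the Pomodoro cycle is easy to lay out as a schedule. This is a rough Python sketch assuming the 25/5 structure described above. The 15-minute long break is my assumption, since the structure above only says “a longer break”, and `pomodoro_schedule` is a hypothetical helper.

```python
from datetime import datetime, timedelta


def pomodoro_schedule(start: datetime, cycles: int, work_min: int = 25,
                      short_break_min: int = 5, long_break_min: int = 15):
    """Return (label, start, end) blocks for a run of Pomodoro cycles.

    Every 4th work block is followed by a long break instead of a short one.
    long_break_min=15 is an assumed default, not a fixed rule.
    """
    blocks = []
    t = start
    for i in range(1, cycles + 1):
        # Focused work block
        work_end = t + timedelta(minutes=work_min)
        blocks.append((f"Work {i}", t, work_end))
        t = work_end
        # Break: long after every 4th cycle, short otherwise
        is_long = i % 4 == 0
        break_end = t + timedelta(minutes=long_break_min if is_long else short_break_min)
        blocks.append(("Long break" if is_long else "Short break", t, break_end))
        t = break_end
    return blocks


schedule = pomodoro_schedule(datetime(2024, 1, 1, 9, 0), cycles=4)
for label, begin, end in schedule:
    print(f"{begin:%H:%M}-{end:%H:%M}  {label}")
```

Starting at 9:00 with four cycles, the last block is the long break ending at 11:10. Ticking off each work block as you finish it gives you the session log.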

4. Digital Time Tracking Apps

These automate much of the process.

Popular tools include:

[Toggl Track](https://toggl.com/track/)

Excellent for manual time tracking and reporting.

[RescueTime](https://www.rescuetime.com/)

Automatically tracks computer/app usage.

[Clockify](https://clockify.me/)

Free and strong for projects/categories.

[Timeular](https://timeular.com/)

Physical tracking device + app.

[Forest](https://www.forestapp.cc/)

Gamifies focus sessions.

[Notion](https://www.notion.so/)

Flexible dashboards and habit/time systems.

[Obsidian](https://obsidian.md/)

Powerful for reflective tracking and journaling.

5. Passive Digital Tracking

Sometimes people resist logging manually.

Passive monitoring tools reveal:

websites visited

app usage

screen time

pickup frequency

notification interruptions

Useful built-ins:

[Apple Screen Time](https://support.apple.com/en-au/guide/iphone/iphb0c7313c9/ios)

[Android Digital Wellbeing](https://wellbeing.google/)

Browser extensions like:

[StayFocusd](https://www.stayfocusd.com/)

[LeechBlock NG](https://www.proginosko.com/leechblock/)

These are particularly valuable because self-estimates of screen usage are often wildly inaccurate.

6. Energy Tracking (Often More Important Than Time)

Two people can both work 8 hours:

one produces enormous value,

the other burns time inefficiently.

So some systems track:

energy

clarity

motivation

stress

cognitive sharpness

Example:

| Time | Activity | Energy |
|---|---|---|
| 8am | Writing | 9/10 |
| 2pm | Admin | 4/10 |

Patterns emerge:

best creative hours

best analytical hours

when breaks are needed

what activities drain energy

This can radically improve scheduling.
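The pattern-finding step can be sketched in a few lines of Python. This is a minimal, hypothetical example: the log entries are invented, energy is assumed to be a 1–10 self-rating recorded alongside the hour of day, and `average_by` is an illustrative helper rather than a feature of any tool mentioned here.

```python
from collections import defaultdict
from statistics import mean

# Illustrative log: (hour of day, activity, energy rating out of 10).
# These values are invented, not real measurements.
log = [
    (8, "Writing", 9),
    (8, "Writing", 8),
    (10, "Analysis", 7),
    (14, "Admin", 4),
    (14, "Email", 5),
]

def average_by(entries, key_index):
    """Average the energy rating grouped by one field (0 = hour, 1 = activity)."""
    groups = defaultdict(list)
    for entry in entries:
        groups[entry[key_index]].append(entry[2])
    return {key: mean(ratings) for key, ratings in groups.items()}

by_hour = average_by(log, 0)
by_activity = average_by(log, 1)

best_hour = max(by_hour, key=by_hour.get)              # highest average energy
most_draining = min(by_activity, key=by_activity.get)  # lowest average energy

print("Average energy by hour:", by_hour)
print("Best hour:", best_hour, "| Most draining activity:", most_draining)
```

With a week or two of real entries, the same grouping shows which hours average highest and which activities consistently score low.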

7. Outcome-Based Tracking

This is more advanced. Instead of asking “How long did I work?”, ask “What meaningful outcomes were produced?”

Examples:

pages written

sales calls completed

designs finished

exercise sessions done

lessons learned

problems solved

This guards against “productive-looking busyness.”

8. Weekly Review Systems

Tracking alone is not enough. The real gains come from review. A weekly review might ask:

What consumed the most time?

What created the most value?

What felt wasteful?

What should be automated?

What should be delegated?

What should be eliminated?

Which activities restored energy?

Which drained it?

Without review, people often collect data but change nothing.

9. Time Auditing Categories

A useful framework is to classify activities into:

| Category | Meaning |
| --- | --- |
| Investment | Builds future capability/value |
| Maintenance | Necessary upkeep |
| Consumption | Entertainment/rest |
| Waste | Little or no value |

The goal is not to eliminate consumption. These are essential:

rest

recreation

socialising

reflection

The goal is reducing unconscious waste.
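An audit using these four categories amounts to tagging each logged block and summing the hours. The Python sketch below assumes a hypothetical mapping from activity names to categories (everything in `CATEGORY` and the sample week is made up for illustration). Unmapped activities fall into an "Uncategorised" bucket so they are not silently dropped.

```python
from collections import Counter

# Hypothetical mapping from activity names to the four audit categories.
CATEGORY = {
    "Writing": "Investment",
    "Course study": "Investment",
    "Email": "Maintenance",
    "Groceries": "Maintenance",
    "Film with friends": "Consumption",
    "Aimless scrolling": "Waste",
}

def audit(entries):
    """Sum hours per category; entries are (activity, hours) pairs."""
    totals = Counter()
    for activity, hours in entries:
        # Unmapped activities stay visible rather than being ignored.
        totals[CATEGORY.get(activity, "Uncategorised")] += hours
    return totals

week = [
    ("Writing", 6.0),
    ("Email", 4.0),
    ("Aimless scrolling", 5.0),
    ("Film with friends", 3.0),
    ("Groceries", 2.0),
]

totals = audit(week)
total_hours = sum(totals.values())
for category, hours in totals.most_common():
    print(f"{category:<12}{hours:5.1f} h {hours / total_hours:7.1%}")
```

The percentage column matters more than the raw hours: it shows what share of the week each category is taking, which is where unconscious waste tends to show up.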

10. Environmental Design

One of the strongest insights in behaviour management is that people often do not need more discipline; they need better environments.

Examples:

phone in another room

website blockers

scheduled email windows

prepared workspace

default routines

checklists

batching similar tasks

This aligns closely with creating systems that channel behaviour toward optimum outcomes rather than relying on continual willpower.

11. Common Discoveries People Make

After tracking for 1–2 weeks, people commonly discover:

interruptions are devastating

multitasking is inefficient

small distractions accumulate enormously

reactive communication dominates the day

sleep affects productivity more than expected

and a few activities generate most results

Often the solution is not “work harder” but “remove friction and low-value activity.”

12. A Very Simple Starter System

If someone is overwhelmed, I would suggest:

For 7 days:

Track only:

Start time

End time

Activity

Then review:

What surprised you?

What should increase?

What should decrease?

Simple systems are far more likely to be sustained. Overly elaborate systems often collapse under their own administrative overhead.
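The starter log needs nothing more than a notebook or spreadsheet, but a few lines of Python show the review arithmetic. This sketch assumes 24-hour "HH:MM" times within a single day (blocks that cross midnight are not handled) and uses an invented sample log; `minutes_between` and `totals_by_activity` are hypothetical helper names.

```python
from collections import defaultdict
from datetime import datetime

# One day of an invented log: (start, end, activity) as 24-hour "HH:MM" strings.
log = [
    ("09:00", "10:30", "Deep work"),
    ("10:30", "11:15", "Email"),
    ("11:15", "12:00", "Meetings"),
    ("13:00", "14:00", "Email"),
]

def minutes_between(start, end):
    """Duration in minutes between two same-day HH:MM times."""
    fmt = "%H:%M"
    delta = datetime.strptime(end, fmt) - datetime.strptime(start, fmt)
    return int(delta.total_seconds() // 60)

def totals_by_activity(entries):
    """Total minutes per activity across all logged blocks."""
    totals = defaultdict(int)
    for start, end, activity in entries:
        totals[activity] += minutes_between(start, end)
    return dict(totals)

# Largest totals first, which is usually where the surprises are.
for activity, minutes in sorted(totals_by_activity(log).items(), key=lambda kv: -kv[1]):
    print(f"{activity:<10} {minutes // 60}h {minutes % 60:02d}m")
```

Keeping only three fields per entry is deliberate: the totals are enough to answer "what surprised you?" without adding the overhead that sinks more elaborate systems.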

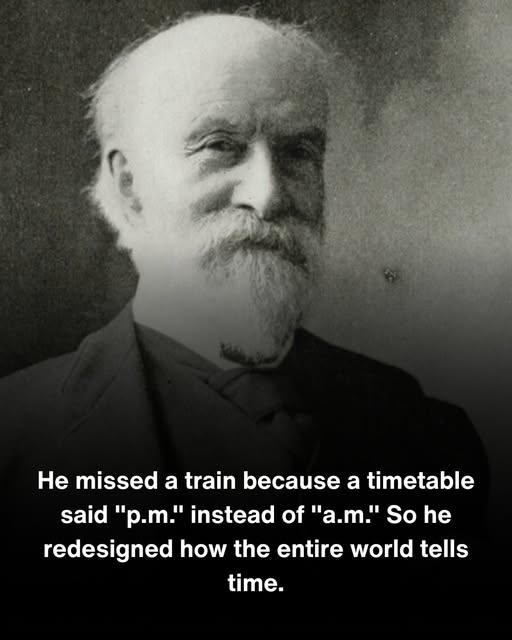

Sandford Fleming – Time Zones

It was the summer of 1876, and Sandford Fleming was stranded.

He stood in a small railway station in Ireland, staring at a schedule that had cost him everything: his connection, his plans, and now his evening. The timetable listed the train to Londonderry at 5:35 in the afternoon. In fact, it left at 5:35 in the morning. By the time Fleming arrived expecting an afternoon departure, the train had been gone for hours.

A printing error. Two tiny letters — a.m. written as p.m. — and here he was, settling into an uncomfortable waiting room for what would become a very long night.

Most people would have cursed, written an angry letter, and moved on.

Fleming was not most people.

He was already one of Canada’s most accomplished engineers — a Scottish immigrant who had arrived at eighteen with little more than ambition and a surveyor’s training, and built a career designing railways, postage stamps, and even an early prototype of inline skates. But as he sat in that cold Irish station, watching the hours drag by, something larger began forming in his mind.

The missed train wasn’t really about a printing error.

It was about a broken world.

In the 1870s, virtually every town on Earth kept its own time, set by when the sun reached its highest point in the sky. This had worked perfectly for centuries, when most people never traveled far from home. But railways had changed everything. A train could now carry passengers hundreds of miles in a day, through dozens of towns each ticking away on their own local clocks. In North America alone, there were more than one hundred different local times in use. A traveler crossing the continent needed to reset their watch at nearly every stop.

Railway companies had tried to solve this by creating their own “railroad time” — but with dozens of competing lines, this only created more confusion. Some major stations displayed several clocks at once, each showing a different time for a different railway. Trains occasionally collided because engineers were operating on different standards. Scientists couldn’t coordinate astronomical observations because observers in different cities couldn’t agree on what time an event had occurred.

The world had built a modern transportation system on top of a medieval approach to time.

Fleming spent that long night in Ireland thinking. And then he spent the next several years doing something about it.

He proposed dividing the entire world into twenty-four time zones — one for each hour of the day — each spanning fifteen degrees of longitude. Within each zone, every clock would show the same time. Between zones, the difference would always be exactly one hour. The zones would be labeled alphabetically, A through Y, with G designating the zone aligned with Greenwich, England.

To prove the concept was real, not just theoretical, Fleming commissioned a custom pocket watch around 1880 — now held at the Smithsonian’s American History Museum. One side showed conventional 12-hour time. The other showed his new 24-hour “Cosmic Time” system, with alphabetical zone markers. He carried his solution in his pocket.

He spent years as an evangelist for the idea: presenting papers at international conferences, lobbying railway companies, and building a coalition of scientists, engineers, and government officials across the globe. On November 18, 1883, a day that became known as “The Day of Two Noons,” American and Canadian railways simultaneously synchronized their clocks to four standardized continental time zones. Some cities experienced noon twice that day as the old system gave way to the new.

The following year, forty-one delegates from twenty-five nations gathered in Washington, D.C., for the International Meridian Conference. After considerable debate, twenty-two nations voted to adopt the meridian passing through the Royal Observatory at Greenwich, England, as the global standard for zero degrees longitude — the foundation of the system we use today.

Fleming’s original dream of a single universal “Cosmic Time” was never adopted. But his framework of twenty-four hourly zones became the backbone of the modern time-zone system, in which each zone is defined as an offset from Coordinated Universal Time (UTC). His alphabetical zone labels survived too: in aviation and the military, “Zulu Time” (Z for the zero meridian) remains the global standard.

The man who missed a train because of two wrong letters had given the world a common language for time itself.

Today, when you check what time it is in another country, when airlines synchronize international schedules, when financial markets trade across continents in real time — all of it traces back to one long, uncomfortable night in an Irish railway station, and a man who refused to accept that the problem was simply bad luck.

He was the wrong person to strand in a waiting room.

He had too much time to think.

The Things Left

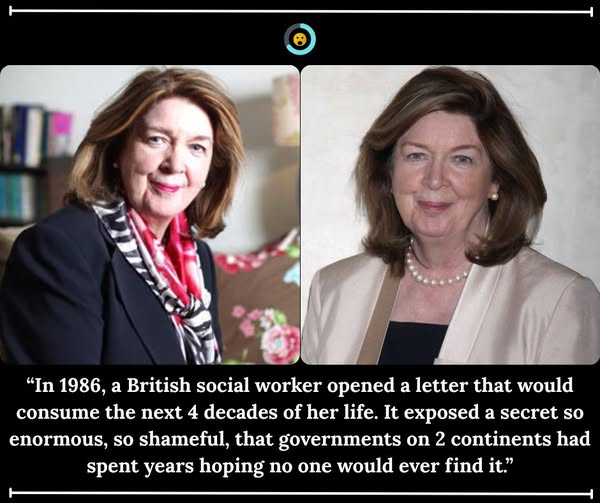

Margaret Humphreys

1986. Nottingham, England.

Margaret Humphreys is a social worker. She is not famous. She has no political connections, no private funding, and no reason to believe that a single letter from a stranger in Australia is about to change her life forever.

The letter is from a woman who says that at the age of 4, she was placed on a boat by the British government and shipped to a children’s home in Australia. She was told her parents were dead. She grew up an orphan on the other side of the world.

Now she is an adult. She wants to know if any of her family is still out there.

Humphreys agrees to investigate. She expects to spend a few weeks searching records and confirming what the woman already suspects — that her parents are gone.

Instead, she finds the woman’s mother. Alive. Living less than an hour from Nottingham.

The woman’s parents were never dead. They were never even told where their child had been sent.

The Secret That Had Been Hidden in Plain Sight.

Humphreys begins pulling on the thread. What unravels is one of the most shocking government programmes in British history.

For over 100 years – from the 1860s all the way to 1970 – the British government and a network of charities and religious organisations had been systematically removing children from care homes and shipping them to Australia, Canada, and other Commonwealth nations. The children were told they were orphans being given a better life.

Most of them were not orphans. They had living parents. They had siblings still in England.

They had families who had surrendered them temporarily during times of poverty or illness, fully expecting to be reunited.

Nobody told the parents where their children went. Nobody told the children their parents were alive.

More than 130,000 children were transported. The youngest were just 3 years old.

Here’s what makes it worse: many of the institutions receiving these children in Australia were run by religious orders who used the children as cheap labour. Boys worked farm fields from before sunrise. Girls cleaned and cooked for institutions that kept them entirely cut off from the outside world. Some were denied any education at all. Investigators would later describe what happened in those institutions as “widespread and systematic sexual abuse.”

The children were told they were the sons and daughters of whores. That they were worthless.

That nobody back in England loved them or wanted them back.

Many of them believed it for the rest of their lives.

1987. Humphreys’ Living Room, Nottingham.

After traveling to Australia and posting newspaper advertisements asking former child migrants to come forward, Humphreys begins to hear back. At first it is a trickle.

Then it becomes thousands, and she is overwhelmed by the response.

She establishes the Child Migrants Trust – initially from her own home, with her husband Mervyn as her closest support – and registers it as a charity in both Australia and Britain.

She has no government backing. No institutional support. The organisations responsible for the scheme – including powerful church bodies and charities – are not remotely pleased to see her digging.

She faces legal pressure. She faces institutional stonewalling. Files go missing. Doors are closed. She is one social worker from Nottingham going up against organisations that have decades of experience in making things disappear.

She does not stop.

The Work.

For the next 23 years, Humphreys travels constantly between Nottingham, Western Australia, and Victoria, combing through emigration records, church ledgers, government archives, and institutional files that were never designed to be found by people like her.

She reunites more than 1,000 individuals with their biological families in those first decades alone. Every reunion is its own extraordinary story. Elderly parents meet children they last saw as toddlers. Brothers and sisters discover each other after 40 or 50 years of believing the other was gone. Middle-aged adults finally learn their own real names.

Some of those parents are in their 80s and 90s by the time Humphreys reaches them. Some die before she can bring their children home.

In 1993, the Australian government awards her the Medal of the Order of Australia – one of the country’s highest civilian honours – for her services on behalf of the child migrants.

In 1994, she publishes her full account in a book called Empty Cradles. It causes a national outcry in Britain.

The Apologies.

It takes 23 years of campaigning to force a government to say sorry.

In 2009, the Australian government issues a formal national apology to all former child migrants for the suffering caused by the scheme.

In 2010, British Prime Minister Gordon Brown stands before Parliament and delivers an official apology on behalf of the United Kingdom. He acknowledges the “misguided” programme that unjustly broke up thousands of families and caused immeasurable harm to the children caught inside it.

A few years later, Humphreys is appointed CBE – Commander of the Order of the British Empire. The same empire that once shipped children across the world to serve it.

The film Oranges and Sunshine, released in 2010 and starring Emily Watson as Humphreys, brings the story to a global audience for the very first time.

What She Found When She Started Pulling That Thread.

Margaret Humphreys was not a detective. She was not a barrister or a politician or a crusading journalist. She was a social worker who opened a letter and decided that the person inside it deserved to know the truth.

She found over 130,000 reasons why that decision mattered.

The Trust she built from her living room is still operating today – still reuniting families, still supporting survivors, still running offices in England and Australia. Because the work is not finished. Some of those stolen children are still searching. Some are still waiting.

1 letter. 1 social worker. 1 decision to follow the truth wherever it led.

Share this with someone who believes that ordinary people can change the world – because Margaret Humphreys proves it, one family at a time.